Advanced Level Business Central Interview Questions

Advanced-level Microsoft Dynamics 365 Business Central interview questions evaluate a candidate’s ability to design, optimize, and scale real-world ERP solutions. At this level, interviewers focus on architecture decisions, performance tuning, complex customizations, integrations, and long-term maintainability.

Candidates are expected to justify why a solution is designed in a certain way, understand internal posting and data flow, and write or review AL code with performance, security, and upgrade safety in mind.

Architecture & Application Design

1. Explain the Overall Architecture of Business Central

Microsoft Dynamics 365 Business Central follows a modern, multi-tier ERP architecture designed for scalability, security, and continuous updates. At a high level, the architecture consists of the presentation layer, application/service layer, and data layer, with clear separation of responsibilities.

The presentation layer includes the Web Client, Mobile App, and integrations consuming APIs. This layer is intentionally thin and contains no business logic. All validations and calculations are handled centrally to ensure consistency across all clients.

The application layer is hosted by the Business Central Service Tier. This is where AL code executes, business rules are enforced, events are published, and extensions interact with the Base Application. The application layer is split logically into the System Application, Base Application, and custom extensions, ensuring isolation between Microsoft code and partner or customer code.

The data layer uses SQL Server (on-premises) or Azure SQL Database (online). In Business Central online there is no direct database access, and on-premises direct SQL writes are unsupported; all data access goes through the service tier. This design protects data integrity, enforces permissions, and supports SaaS multi-tenancy.

This architecture enables upgrade safety, cloud scalability, strong security boundaries, and seamless integration with Microsoft cloud services such as Power Platform and Azure Application Insights.

2. Why Is Extension-Based Development Mandatory in Business Central?

Extension-based development is mandatory because Business Central is designed as a continuously updated SaaS ERP. In the online version, standard objects cannot be modified at all; on-premises, direct modifications would have to be merged by hand at every update, which makes long-term maintenance impractical.

Extensions isolate custom functionality into separate packages that interact with the Base Application through well-defined mechanisms such as events, interfaces, and APIs. This allows Microsoft to update the standard application without overwriting customer-specific logic.

From an architectural perspective, extension-based development enforces clean separation of concerns. Standard ERP logic remains stable, while custom logic evolves independently. This dramatically reduces technical debt and upgrade risk.

In real projects, extension-based design also improves testability, version control, and collaboration across teams. It is a foundational principle of Business Central’s cloud-first strategy.

3. Explain Base App vs System App vs Custom Extensions

Business Central is logically divided into multiple application layers to enforce modularity and upgrade safety.

The System Application contains core platform-level modules such as email, telemetry helpers, upgrade tags, retention policies, and other framework services. It sits closest to the platform, is consumed through its public codeunits and interfaces, and is rarely extended directly.

The Base Application contains Microsoft’s standard ERP logic, including finance, sales, purchasing, inventory, manufacturing, and service management. This is where standard business processes are implemented.

Custom extensions are developed by partners or customers to add or modify behavior without changing the Base Application. They interact with the system using events, interfaces, and APIs.

Understanding this separation is critical when making design decisions, because placing logic in the wrong layer can lead to poor maintainability and upgrade issues.

4. How Do You Decide Where Business Logic Should Live?

Deciding where business logic should reside is a key architectural responsibility. The guiding principle is scope and reusability.

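Logic related to data integrity and validation should be placed in table and field triggers so that it runs whenever data goes through the standard Insert, Modify, or Validate path, regardless of which page, API, or process entered it. Note that code can still bypass triggers by calling Insert(false) or Modify(false), so critical rules should not rely on pages alone. Reusable or process-oriented logic should be placed in codeunits, where it can be called from multiple contexts.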

Page triggers should only contain UI-specific logic such as visibility, enabling actions, or user interaction handling. Placing core logic in pages leads to duplication and inconsistent behavior.

This layered approach improves maintainability, testability, and long-term scalability of the solution.
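The placement rules above can be sketched with a hypothetical "Credit Review Date" field: the data-integrity check lives in the field's OnValidate trigger, reusable process logic lives in a codeunit, and the page extension handles only styling. Object IDs, names, and the rule itself are illustrative assumptions, not standard Business Central objects.

```al
// Hypothetical objects; IDs and names are illustrative only.
tableextension 50100 "Customer Credit Ext" extends Customer
{
    fields
    {
        field(50100; "Credit Review Date"; Date)
        {
            Caption = 'Credit Review Date';
            DataClassification = CustomerContent;

            trigger OnValidate()
            begin
                // Data-integrity rule: runs on every Validate, from any page, API, or code path
                if (Rec."Credit Review Date" <> 0D) and (Rec."Credit Review Date" < WorkDate()) then
                    Error('The credit review date cannot be in the past.');
            end;
        }
    }
}

codeunit 50100 "Credit Review Mgt."
{
    // Process logic: reusable from pages, job queue entries, or APIs
    procedure ScheduleReview(var Customer: Record Customer; DaysAhead: Integer)
    begin
        Customer.Validate("Credit Review Date", WorkDate() + DaysAhead);
        Customer.Modify(true);
    end;
}

pageextension 50100 "Customer Card Credit Ext" extends "Customer Card"
{
    layout
    {
        addlast(General)
        {
            field("Credit Review Date"; Rec."Credit Review Date")
            {
                ApplicationArea = All;
                // UI-only concern: styling, no business rules here
                StyleExpr = ReviewStyle;
            }
        }
    }

    var
        ReviewStyle: Text;

    trigger OnAfterGetRecord()
    begin
        ReviewStyle := 'Standard';
        if (Rec."Credit Review Date" <> 0D) and (Rec."Credit Review Date" <= WorkDate()) then
            ReviewStyle := 'Unfavorable';
    end;
}
```

Because ScheduleReview goes through Validate, the same date check applies whether the value comes from the card, an API page, or a job queue entry.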

5. How Do You Design for Upgrade Safety?

Designing for upgrade safety starts with accepting that standard objects must never be modified. All customizations must be implemented through extensions, events, and APIs.

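Developers should rely on publisher events provided by Microsoft to extend behavior. When a needed event is not available, the upgrade-safe route is to request it from Microsoft (extensibility requests are filed in the ALAppExtensions GitHub repository) and, in the meantime, use supported patterns such as interfaces or existing events rather than workarounds.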

Data changes across versions are handled using upgrade codeunits, which migrate data safely during extension upgrades. Obsolete attributes are used to deprecate fields or procedures without breaking existing code.

Upgrade-safe design ensures that solutions remain functional across major releases and significantly reduces long-term maintenance costs.

Performance & Scalability

6. How Do You Analyze Performance Issues in Business Central?

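Performance analysis starts with identifying slow user actions, for example with the in-client Performance Profiler. Telemetry in Application Insights, such as signals for long-running AL methods and long-running SQL queries, then helps locate the actual bottleneck.

Common causes include missing filters, keys that do not match the filters being used, FlowField calculations inside loops, and excessive database calls.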

7. Explain the Role of Keys and Indexes in Performance

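Keys define the available sort orders, and each key normally becomes a SQL Server index (unless MaintainSqlIndex is set to false). Choosing keys that match common filters dramatically improves filtering and looping performance.

SetCurrentKey sets the sort order, that is, the ORDER BY of the generated query. It does not force SQL Server to use a particular index: the query optimizer chooses the index, mainly based on the filters. Calling SetCurrentKey with a key that also matches the filters lets the optimizer satisfy both filtering and sorting from one index.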
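A minimal sketch of a key defined in a table extension, assuming a hypothetical "External Ref." field on Sales Header. The key gives SQL Server an index on the new column, and SetCurrentKey requests a matching sort order; the filters are what let the optimizer use that index.

```al
// Hypothetical field and key; IDs and names are illustrative only.
tableextension 50101 "Sales Header Ref Ext" extends "Sales Header"
{
    fields
    {
        field(50101; "External Ref."; Code[35])
        {
            DataClassification = CustomerContent;
        }
    }
    keys
    {
        // Becomes a SQL index on the extension field, so lookups by reference avoid table scans
        key(ExternalRefKey; "External Ref.") { }
    }
}

codeunit 50101 "External Ref. Lookup"
{
    procedure FindOrder(ExternalRef: Code[35]; var SalesHeader: Record "Sales Header"): Boolean
    begin
        // Sort order matching the key above
        SalesHeader.SetCurrentKey("External Ref.");
        // Filters on the indexed column drive the optimizer's index choice
        SalesHeader.SetRange("Document Type", SalesHeader."Document Type"::Order);
        SalesHeader.SetRange("External Ref.", ExternalRef);
        exit(SalesHeader.FindFirst());
    end;
}
```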

8. How Do You Optimize Large Data Processing?

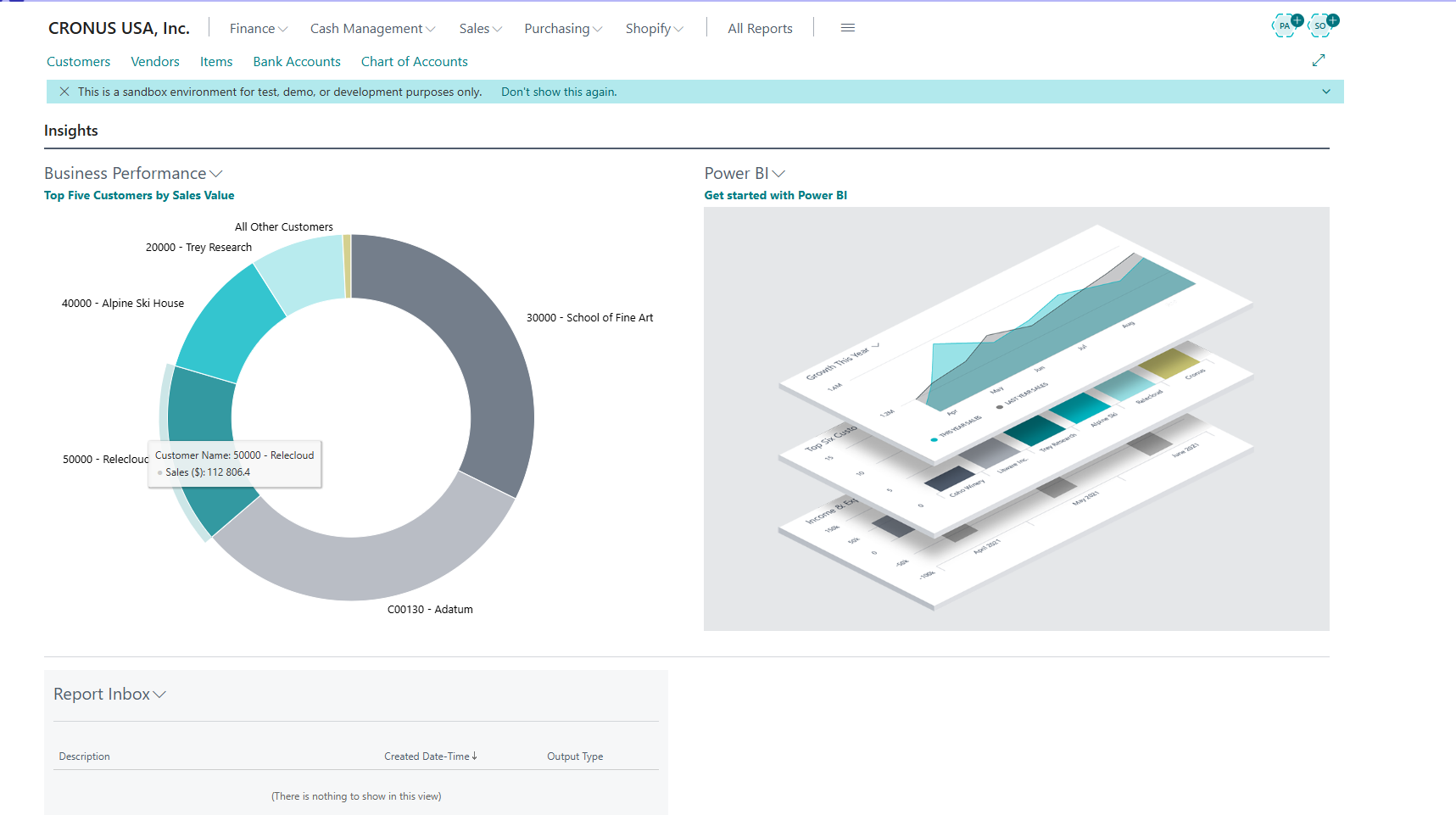

Optimization involves filtering as early as possible, iterating with FindSet, loading only the needed columns with SetLoadFields, and using temporary tables for intermediate results. Avoid nested loops over large datasets.

Batch processing and background tasks are preferred for heavy operations.
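The techniques above combined in one routine, as a sketch: filters are pushed to SQL, SetLoadFields limits the columns read, FindSet iterates the result once, and a temporary Customer table collects distinct customer numbers instead of a nested loop. The codeunit, procedure, and threshold logic are hypothetical; "Sales (LCY)" is the standard sales amount field on Cust. Ledger Entry.

```al
// Hypothetical routine; IDs and names are illustrative only.
codeunit 50102 "Open Entry Scan Sketch"
{
    procedure CollectCustomersWithLargeOpenInvoices(MinAmount: Decimal; var TempCustomer: Record Customer temporary)
    var
        CustLedgEntry: Record "Cust. Ledger Entry";
    begin
        TempCustomer.Reset();
        TempCustomer.DeleteAll();

        // Filter early so SQL Server returns only the rows that matter
        CustLedgEntry.SetRange("Document Type", CustLedgEntry."Document Type"::Invoice);
        CustLedgEntry.SetRange(Open, true);
        CustLedgEntry.SetFilter("Sales (LCY)", '>=%1', MinAmount);
        // Read only the column used inside the loop (partial records)
        CustLedgEntry.SetLoadFields("Customer No.");

        // FindSet suits a single forward pass over the result set
        if CustLedgEntry.FindSet() then
            repeat
                // In-memory temporary table replaces a nested loop over the Customer table
                if not TempCustomer.Get(CustLedgEntry."Customer No.") then begin
                    TempCustomer.Init();
                    TempCustomer."No." := CustLedgEntry."Customer No.";
                    TempCustomer.Insert();
                end;
            until CustLedgEntry.Next() = 0;
    end;
}
```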

9. Explain Locking and Concurrency in Business Central

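Business Central relies on SQL Server locking. Poorly designed code can cause blocking and deadlocks.

Developers must keep transactions short, lock only the records they need, and avoid explicit Commit calls that are not required, because they break the atomicity of a process.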

10. What Causes Deadlocks and How Do You Prevent Them?

Deadlocks occur when transactions lock resources in conflicting order. Prevention includes consistent table access order and shorter transactions.
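A sketch of consistent access order, assuming a hypothetical routine that updates an order's lines: every process that touches both tables reads and locks the header before the lines, so two sessions cannot wait on each other in opposite order. LockTable makes subsequent reads take update locks; recent versions also offer the Record.ReadIsolation property for finer control.

```al
// Hypothetical routine; IDs and names are illustrative only.
codeunit 50103 "Lock Order Sketch"
{
    procedure UpdateLineDescriptions(DocNo: Code[20]; NewDescription: Text[100])
    var
        SalesHeader: Record "Sales Header";
        SalesLine: Record "Sales Line";
    begin
        // 1. Header first: subsequent reads on this table take update locks
        SalesHeader.LockTable();
        SalesHeader.Get(SalesHeader."Document Type"::Order, DocNo);

        // 2. Then the lines, always in this order in every process that touches both tables
        SalesLine.LockTable();
        SalesLine.SetRange("Document Type", SalesHeader."Document Type");
        SalesLine.SetRange("Document No.", SalesHeader."No.");
        if SalesLine.FindSet() then
            repeat
                SalesLine.Description := NewDescription;
                SalesLine.Modify();
            until SalesLine.Next() = 0;

        // No Commit here: the caller controls the (short) transaction boundary
    end;
}
```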

Advanced AL & Code Design

11. How Do You Structure a Large AL Solution?

Large solutions are split into multiple extensions with clear responsibilities. Shared logic is isolated into common libraries.

This improves maintainability and team collaboration.

12. Explain Event-Driven Architecture with a Real Example

Event-driven architecture allows custom logic to react to standard events.

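codeunit 50120 "Sales Post Subscribers"
{
    // Subscriber parameter names must match the publisher's; unused parameters can be omitted
    [EventSubscriber(ObjectType::Codeunit, Codeunit::"Sales-Post", 'OnAfterPostSalesDoc', '', false, false)]
    local procedure AfterSalesPost(var SalesHeader: Record "Sales Header")
    begin
        // Custom logic after posting
    end;
}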

This ensures upgrade-safe customization.

13. Difference Between Integration Events and Business Events

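Integration events are extension points that a publisher can add as needed; business events describe a business-level occurrence and are treated as a stable, documented contract. Exposing events to Power Automate and Dataverse is done with external business events, declared with the ExternalBusinessEvent attribute in recent versions.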

Choosing correctly affects extensibility and integrations.
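A sketch of the publisher side of event-driven design inside your own extension, using the common IsHandled pattern. The codeunits, procedures, and event names are hypothetical; only the IntegrationEvent and EventSubscriber attributes are standard AL.

```al
// Hypothetical objects; IDs and names are illustrative only.
codeunit 50104 "Order Approval Mgt."
{
    procedure ApproveOrder(var SalesHeader: Record "Sales Header")
    var
        IsHandled: Boolean;
    begin
        // Extension point: subscribers may add checks or replace the default logic
        OnBeforeApproveOrder(SalesHeader, IsHandled);
        if IsHandled then
            exit;

        SalesHeader.TestField(Status, SalesHeader.Status::Open);
        // ... default approval logic (hypothetical) ...

        OnAfterApproveOrder(SalesHeader);
    end;

    [IntegrationEvent(false, false)]
    local procedure OnBeforeApproveOrder(var SalesHeader: Record "Sales Header"; var IsHandled: Boolean)
    begin
    end;

    [IntegrationEvent(false, false)]
    local procedure OnAfterApproveOrder(var SalesHeader: Record "Sales Header")
    begin
    end;
}

codeunit 50105 "Approval Subscriber"
{
    [EventSubscriber(ObjectType::Codeunit, Codeunit::"Order Approval Mgt.", 'OnBeforeApproveOrder', '', false, false)]
    local procedure CheckExternalDocNo(var SalesHeader: Record "Sales Header"; var IsHandled: Boolean)
    begin
        // Adds a rule without replacing the default logic (IsHandled stays false)
        SalesHeader.TestField("External Document No.");
    end;
}
```

Setting IsHandled to true in a subscriber would replace the default logic entirely, so that option should be used sparingly.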

14. How Do You Implement Cross-Company Logic?

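Cross-company logic uses ChangeCompany, which redirects a record variable to another company's data. It must be used carefully: triggers and validations called on that record still run in the context of the current company, and reading or writing large volumes across companies can cause locking and performance issues.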
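A minimal read-only sketch of ChangeCompany; the codeunit and procedure names are hypothetical and the company name is supplied by the caller.

```al
// Hypothetical helper; IDs and names are illustrative only.
codeunit 50106 "Cross-Company Read Sketch"
{
    procedure CountBlockedCustomers(OtherCompanyName: Text[30]): Integer
    var
        Customer: Record Customer;
    begin
        // Redirect this record variable to the other company's data; returns false if it cannot
        if not Customer.ChangeCompany(OtherCompanyName) then
            Error('Company %1 was not found.', OtherCompanyName);

        // Read-only use: triggers called on this record would still run in the current company
        Customer.SetFilter(Blocked, '<>%1', Customer.Blocked::" ");
        exit(Customer.Count());
    end;
}
```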

15. How Do You Handle Error Management in Complex Processes?

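TryFunctions and Codeunit.Run allow graceful failures without data corruption. A [TryFunction] catches errors from operations such as parsing or external calls, but by default it must not write to the database. Calling Codeunit.Run inside an if statement (with no write transaction open) isolates a unit of work: if it fails, the changes it made are rolled back and the error is available through GetLastErrorText.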
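A sketch of both error-isolation tools, assuming a hypothetical per-document processing step and a hypothetical LogFailure procedure: a [TryFunction] for a non-writing operation, and Codeunit.Run in an if statement so that one failing document is rolled back without stopping the batch.

```al
// Hypothetical objects; IDs and names are illustrative only.
codeunit 50107 "Process One Document"
{
    TableNo = "Sales Header";

    trigger OnRun()
    begin
        // Any writes made here are rolled back if an error occurs
        Rec.TestField("External Document No.");
        // ... hypothetical processing ...
    end;
}

codeunit 50108 "Batch Error Handling Sketch"
{
    procedure ProcessOrders()
    var
        SalesHeader: Record "Sales Header";
        ProcessOne: Codeunit "Process One Document";
    begin
        // Codeunit.Run with a used return value requires no open write transaction
        Commit();
        SalesHeader.SetRange("Document Type", SalesHeader."Document Type"::Order);
        if SalesHeader.FindSet() then
            repeat
                ClearLastError();
                if not ProcessOne.Run(SalesHeader) then
                    LogFailure(SalesHeader."No.", GetLastErrorText());
                // Close any write transaction (e.g. the log entry) before the next Run
                Commit();
            until SalesHeader.Next() = 0;
    end;

    procedure ParseOrZero(Input: Text): Decimal
    var
        Amount: Decimal;
    begin
        if TryParseAmount(Input, Amount) then
            exit(Amount);
        exit(0);
    end;

    [TryFunction]
    local procedure TryParseAmount(Input: Text; var Amount: Decimal)
    begin
        // Evaluate without a return value raises an error on bad input, which the TryFunction catches
        Evaluate(Amount, Input);
    end;

    local procedure LogFailure(DocNo: Code[20]; ErrorText: Text)
    begin
        // Hypothetical: write to a custom log table or emit telemetry
    end;
}
```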

Posting & Data Flow

16. Explain Complete Data Flow During Sales Posting

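Sales posting (codeunit 80, Sales-Post) creates posted documents such as Sales Shipment and Sales Invoice, Cust. Ledger Entries with their Detailed Cust. Ledg. Entries, G/L Entries and VAT Entries (through Gen. Jnl.-Post Line, codeunit 12), and Item Ledger Entries with Value Entries (through Item Jnl.-Post Line, codeunit 22).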

Understanding this flow is essential for troubleshooting and customization.

17. How Do You Extend Posting Logic Safely?

Posting logic is extended through events, never by modifying posting codeunits.

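codeunit 50121 "GL Posting Subscribers"
{
    // Subscriber parameters must use the publisher's names and types; unused ones can be omitted
    [EventSubscriber(ObjectType::Codeunit, Codeunit::"Gen. Jnl.-Post Line", 'OnAfterInsertGLEntry', '', false, false)]
    local procedure AfterGLEntry(var GLEntry: Record "G/L Entry")
    begin
        // Extend posting here; to change field values, use an event raised before the entry is inserted
    end;
}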

18. How Do You Flow Custom Fields into Ledger Entries?

Flowing custom fields from documents to ledger entries is a common real-world requirement and a classic advanced-level interview question. The key principle is that ledger entries should never be updated manually after posting. Instead, values must be transferred during the posting process itself.

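The correct design approach starts by adding the custom field using a table extension on the source table, such as Sales Header or Sales Line. Because posting passes values to the ledgers through journal lines (Gen. Journal Line, and Item Journal Line for inventory), the field usually has to exist on that intermediate table as well. If the value needs to be stored permanently for audit or reporting purposes, the same field must also be added to the target ledger table, such as G/L Entry, Cust. Ledger Entry, or Value Entry.

The transfer logic is implemented using event subscribers on the publishers raised while posting builds these records, for example OnAfterCopyGenJnlLineFromSalesHeader on the Gen. Journal Line table (document to journal line) and OnAfterCopyGLEntryFromGenJnlLine on the G/L Entry table (journal line to ledger entry). Event names and signatures change between versions, so they should be checked against the Base Application being targeted.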

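Example (assuming table extensions add a "Custom Reference" field to both Gen. Journal Line and G/L Entry):

codeunit 50122 "Custom Reference Flow"
{
    // Raised while the G/L entry is filled from the journal line, before it is inserted,
    // so the assigned value is saved with the entry (verify the signature for your version)
    [EventSubscriber(ObjectType::Table, Database::"G/L Entry", 'OnAfterCopyGLEntryFromGenJnlLine', '', false, false)]
    local procedure CopyCustomField(var GLEntry: Record "G/L Entry"; var GenJournalLine: Record "Gen. Journal Line")
    begin
        GLEntry."Custom Reference" := GenJournalLine."Custom Reference";
    end;
}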

This approach ensures that the data flows naturally with the posting logic, remains upgrade-safe, and preserves the integrity of financial records.

19. Explain Costing and Valuation Impact

Costing and valuation are core ERP concepts and are critical areas of focus in advanced Business Central interviews. Inventory costing determines how item movements affect financial statements and profitability.

Business Central uses Value Entries to store cost-related information for inventory transactions. These entries capture actual cost, expected cost, adjustments, and revaluations. Item Ledger Entries track quantity, while Value Entries track financial impact.

Different costing methods such as FIFO, Average, or Standard Cost influence how costs are calculated and when they are recognized in the General Ledger. Incorrect understanding or customization of costing logic can result in inaccurate Cost of Goods Sold (COGS) and inventory valuation.

Advanced candidates are expected to understand how revaluations, adjustments, and cost posting to G/L work together to maintain financial accuracy.
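A simplified worked example of how the costing method changes COGS, ignoring cost adjustment timing and average cost period settings: two receipts of 10 units at 5 and 7, followed by a sale of 10 units.

```latex
\begin{aligned}
\text{Receipts:} \quad & 10 \times 5 = 50, \qquad 10 \times 7 = 70 \\
\text{FIFO COGS (sell 10):} \quad & 10 \times 5 = 50 \\
\text{Average unit cost:} \quad & \frac{50 + 70}{20} = 6 \\
\text{Average COGS (sell 10):} \quad & 10 \times 6 = 60
\end{aligned}
```

Total inventory cost (120) is the same under both methods; only its split between COGS and remaining inventory (70 under FIFO, 60 under Average) differs.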

20. How Do You Debug Posting Issues?

Debugging posting issues requires a systematic understanding of Business Central’s posting architecture. Advanced professionals do not rely on trial and error; instead, they trace data flow step by step.

The process typically starts by identifying the document being posted and reviewing its posting setup, such as posting groups, dimensions, and VAT configuration. Next, ledger entries created during posting are analyzed, including G/L Entries, Customer or Vendor Ledger Entries, Item Ledger Entries, and Value Entries.

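Developers often use the debugger, the Preview Posting action (which shows the entries a document would create without committing them), temporary Message statements, or telemetry to identify where logic behaves unexpectedly. Reviewing Detailed Cust. Ledg. Entries and Detailed Vendor Ledg. Entries is especially useful when reconciling balances or unexpected totals.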

A strong understanding of posting codeunits and events allows faster root-cause analysis and safer fixes.
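A hypothetical helper for the reconciliation step described above: it counts and sums what a posted sales invoice wrote to the G/L, customer, and value ledgers. It assumes the invoice number is unique across those ledgers; a G/L net amount other than zero points to an unbalanced or incompletely found posting.

```al
// Hypothetical debugging helper; IDs and names are illustrative only.
codeunit 50109 "Posting Debug Helper"
{
    procedure SummarizeInvoice(InvoiceNo: Code[20])
    var
        GLEntry: Record "G/L Entry";
        CustLedgEntry: Record "Cust. Ledger Entry";
        ValueEntry: Record "Value Entry";
    begin
        GLEntry.SetRange("Document No.", InvoiceNo);
        // G/L entries of one posted document should net to zero
        GLEntry.CalcSums(Amount);

        CustLedgEntry.SetRange("Document No.", InvoiceNo);

        ValueEntry.SetRange("Document No.", InvoiceNo);
        ValueEntry.CalcSums("Cost Amount (Actual)", "Sales Amount (Actual)");

        Message('G/L entries: %1 (net %2)\Customer ledger entries: %3\Value entries: %4, cost %5, sales %6',
            GLEntry.Count(), GLEntry.Amount, CustLedgEntry.Count(),
            ValueEntry.Count(), ValueEntry."Cost Amount (Actual)", ValueEntry."Sales Amount (Actual)");
    end;
}
```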

Integrations & External Systems

21. How Do You Design REST APIs in Business Central?

Designing REST APIs in Business Central requires balancing data exposure, security, and long-term compatibility. APIs are typically implemented using API pages, which expose data in a standardized, versioned format.

A well-designed API clearly defines the entity, supported operations, and data scope. Versioning is critical to avoid breaking existing integrations when changes are introduced.

Example:

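page 50100 CustomerAPI
{
    PageType = API;
    APIPublisher = 'scrutnlearn';
    APIGroup = 'customers';
    APIVersion = 'v1.0';
    EntityName = 'customer';
    EntitySetName = 'customers';
    SourceTable = Customer;
    // API records are keyed on SystemId, a stable GUID that survives renames of "No."
    ODataKeyFields = SystemId;
    DelayedInsert = true;

    layout
    {
        area(Content)
        {
            repeater(Records)
            {
                // API field names are camelCase; expose only what integrations need
                field(id; Rec.SystemId) { Editable = false; }
                field(number; Rec."No.") { }
                field(displayName; Rec.Name) { }
                field(email; Rec."E-Mail") { }
            }
        }
    }
}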

Advanced candidates are expected to understand API limits, filtering, pagination, and the importance of avoiding direct exposure of sensitive or transactional-only fields.

22. How Do You Handle Authentication and Security in Integrations?

Authentication and security are critical in Business Central integrations, especially in SaaS environments. Business Central relies on Microsoft Entra ID (formerly Azure Active Directory) for authentication using OAuth 2.0.

External systems authenticate as registered Microsoft Entra applications, which are set up in Business Central and assigned appropriate permission sets. This ensures that integrations can access only the data they are authorized to use.

Advanced professionals must design integrations with the principle of least privilege, implement proper error handling, and ensure sensitive data is never exposed unintentionally. Security design is as important as functional correctness in enterprise integrations.

23. Explain Dataverse Integration Strategy

Dataverse plays a strategic role in the Business Central ecosystem as an integration and extension platform, not as a replacement for the ERP database. Advanced interviewers expect candidates to clearly understand this distinction.

Business Central remains the system of record for transactional and financial data such as postings, ledger entries, and inventory valuation. Dataverse is used to store complementary data that supports automation, user experience, and cross-application scenarios.

A well-designed Dataverse integration selectively synchronizes data from Business Central using standard connectors, APIs, or business events. Only required entities and fields are exposed to avoid duplication and data inconsistency.

Dataverse is especially useful when Business Central needs to interact with Power Apps, Power Automate, Dynamics 365 apps, or external systems that do not require full ERP complexity.

Advanced architects design Dataverse integrations with clear ownership rules, conflict resolution strategies, and performance considerations to ensure scalability and long-term maintainability.

24. How Do You Handle High-Volume Integrations?

High-volume integrations require careful architectural planning to avoid performance degradation and data inconsistencies. In advanced Business Central implementations, integrations must be designed to handle large data volumes without blocking users or core ERP processes.

The preferred approach is asynchronous processing using background sessions, job queues, or external message queues. This decouples integration workloads from user actions and improves system responsiveness.

Batch processing is commonly used to group records and reduce the number of API calls. Retry logic and error handling mechanisms are essential to manage transient failures without data loss.

Advanced solutions also implement idempotency to prevent duplicate data creation and use logging tables or telemetry to monitor integration health.

Handling high-volume integrations successfully demonstrates a strong understanding of scalability, reliability, and enterprise integration patterns.
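A sketch of idempotent inbound processing, assuming a hypothetical staging table keyed on the sending system's identifier. Replaying the same message updates or skips the existing row instead of creating a duplicate; if two sessions race, the primary key makes the second Insert fail rather than silently duplicating data.

```al
// Hypothetical staging table and codeunit; IDs and names are illustrative only.
table 50100 "Integration Order Staging"
{
    DataClassification = CustomerContent;

    fields
    {
        field(1; "External ID"; Text[50]) { }
        field(2; "Customer No."; Code[20]) { }
        field(3; Amount; Decimal) { }
        field(4; Processed; Boolean) { }
    }
    keys
    {
        // The external system's identifier is the primary key, so a replay cannot add a second row
        key(PK; "External ID") { }
    }
}

codeunit 50110 "Staging Upsert Sketch"
{
    procedure Upsert(ExternalId: Text[50]; CustomerNo: Code[20]; NewAmount: Decimal)
    var
        Staging: Record "Integration Order Staging";
    begin
        if Staging.Get(ExternalId) then begin
            // Already received: leave processed rows untouched, refresh pending ones
            if Staging.Processed then
                exit;
            Staging."Customer No." := CustomerNo;
            Staging.Amount := NewAmount;
            Staging.Modify(true);
        end else begin
            Staging.Init();
            Staging."External ID" := ExternalId;
            Staging."Customer No." := CustomerNo;
            Staging.Amount := NewAmount;
            Staging.Insert(true);
        end;
    end;
}
```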

25. How Do You Ensure Data Consistency Across Systems?

Ensuring data consistency across systems is one of the most critical challenges in ERP integrations. Advanced interviewers expect candidates to explain both technical and process-level strategies.

In Business Central integrations, data consistency is achieved by defining a clear system of record for each data entity. Business Central typically owns financial and transactional data, while external systems may own auxiliary or operational data.

Techniques such as idempotent APIs, unique external identifiers, and controlled synchronization intervals help prevent duplicate or conflicting records. Validation rules are applied before data is committed to ensure integrity.

Reconciliation processes, audit logs, and exception handling mechanisms are used to detect and resolve mismatches. In enterprise environments, consistency is maintained through monitoring, alerts, and periodic data audits.

Strong data consistency design protects financial accuracy, reporting reliability, and regulatory compliance.

Power Platform & Automation

26. When Should You Use Power Platform Instead of AL?

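Power Platform is ideal for low-code workflows (Power Automate), lightweight apps (Power Apps), and analytics (Power BI), especially when a process spans several systems.

AL is reserved for core ERP logic that must run inside Business Central's transactions, validations, and posting.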

27. Explain Business Events with Example

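codeunit 50123 "Sales Business Events"
{
    // Business event publisher: the signature is a contract and should not change once released
    [BusinessEvent(false)]
    procedure OnSalesPosted(SalesInvoiceNo: Code[20])
    begin
        // Publisher bodies stay empty; posting code raises the event by calling OnSalesPosted(...)
    end;
}

Business events give other extensions a stable hook to automate on; for Power Automate triggers, an external business event is used instead.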

Testing & DevOps

28. How Do You Implement Automated Testing in Business Central?

Automated testing in Business Central is implemented using test codeunits written in AL. These tests validate business logic, posting behavior, and regression scenarios without manual intervention. Automated tests are critical in SaaS environments where frequent updates are released.

Test codeunits simulate real business scenarios by creating test data, executing processes such as posting documents, and validating expected outcomes using assertions. Microsoft provides a standard test framework and libraries that support isolation, rollback, and repeatable execution.

Well-designed automated tests reduce regression risks, improve confidence during upgrades, and support continuous delivery pipelines.
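A minimal test codeunit sketch. The code under test ("Discount Calc") is hypothetical; the Assert codeunit comes from Microsoft's Library Assert test app, which the test extension must declare as a dependency.

```al
// Hypothetical code under test and its tests; IDs and names are illustrative only.
codeunit 50111 "Discount Calc"
{
    // 5% discount at or above 1000, none below
    procedure CalcDiscount(Amount: Decimal): Decimal
    begin
        if Amount < 0 then
            Error('Amount cannot be negative.');
        if Amount >= 1000 then
            exit(Round(Amount * 0.05, 0.01));
        exit(0);
    end;
}

codeunit 50112 "Discount Calc Tests"
{
    Subtype = Test;

    var
        Assert: Codeunit Assert;
        DiscountCalc: Codeunit "Discount Calc";

    [Test]
    procedure DiscountAppliedAtThreshold()
    begin
        // [GIVEN] an amount exactly at the threshold [WHEN] calculated [THEN] 5% is returned
        Assert.AreEqual(50.0, DiscountCalc.CalcDiscount(1000), 'Discount at threshold');
    end;

    [Test]
    procedure NoDiscountBelowThreshold()
    begin
        Assert.AreEqual(0.0, DiscountCalc.CalcDiscount(999.99), 'No discount below threshold');
    end;

    [Test]
    procedure NegativeAmountIsRejected()
    begin
        // asserterror expects the next statement to fail; the error text is then verified
        asserterror DiscountCalc.CalcDiscount(-1);
        Assert.ExpectedError('Amount cannot be negative.');
    end;
}
```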

29. Explain CI/CD for Business Central in Detail

CI/CD (Continuous Integration and Continuous Deployment) automates the build, test, and deployment of Business Central extensions. In real projects, CI/CD pipelines are commonly implemented using Azure DevOps or GitHub Actions.

A typical pipeline includes compiling AL code, running automated tests, performing code analysis, and deploying extensions to sandbox or production environments. CI/CD ensures consistent deployments, reduces human error, and accelerates release cycles.

Advanced candidates are expected to understand how pipelines integrate with version control, environment strategies, and approval workflows.

30. How Do You Manage Multiple Environments in Business Central?

Business Central online offers two environment types, sandbox and production; development, test, and acceptance environments are typically separate sandboxes. Each environment serves a specific purpose in the application lifecycle, isolating risk and controlling changes.

Development and sandbox environments are used for coding and testing, while production environments host live business data. Proper environment management ensures stability, controlled deployments, and safe experimentation.

31. How Do You Design Permission Sets for Large Organizations?

In large organizations, permission design must balance security and usability. Permission sets should be role-based and aligned with business responsibilities rather than individual users.

Advanced implementations use layered permission sets, separating read-only access, posting permissions, and administrative rights. This approach simplifies maintenance and auditing while enforcing the principle of least privilege.
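A sketch of layered permission sets in AL; the set names are hypothetical, and real posting requires permissions on many more ledger and setup tables than shown here.

```al
// Hypothetical permission sets; IDs and names are illustrative only.
permissionset 50100 "SALES READ"
{
    Assignable = true;
    Caption = 'Sales - read only';
    Permissions =
        tabledata Customer = R,
        tabledata "Sales Header" = R,
        tabledata "Sales Line" = R;
}

permissionset 50101 "SALES POST"
{
    Assignable = true;
    Caption = 'Sales - create and post';
    // Builds on the read-only layer instead of repeating it
    IncludedPermissionSets = "SALES READ";
    Permissions =
        tabledata "Sales Header" = RIMD,
        tabledata "Sales Line" = RIMD,
        codeunit "Sales-Post" = X;
}
```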

32. How Do You Secure Sensitive Data in Business Central?

Securing sensitive data involves data classification, permission control, and compliance with security policies. In Business Central, every table field carries a DataClassification property that identifies sensitive information.

Access to sensitive fields is restricted using permissions, and integrations are secured using authentication and authorization mechanisms. In enterprise environments, Power Platform Data Loss Prevention (DLP) policies are also applied to the connectors that reach Business Central data.
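A sketch of classifying and masking a hypothetical sensitive field; masking only hides the value on screen, so permissions still decide who can read it through other channels such as APIs or reports.

```al
// Hypothetical field; IDs and names are illustrative only.
tableextension 50102 "Employee Sensitive Ext" extends Employee
{
    fields
    {
        field(50100; "Emergency Contact Phone"; Text[30])
        {
            Caption = 'Emergency Contact Phone';
            // Marks the data as personal so privacy and data-subject processes include it
            DataClassification = EndUserIdentifiableInformation;
            // Hides the value in the client UI
            ExtendedDatatype = Masked;
        }
    }
}
```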

33. How Do You Scale Business Central for Large User Bases?

Scaling Business Central involves optimizing AL code, leveraging SaaS elasticity, and using background processing. Cloud deployments automatically scale infrastructure, but application design must still be efficient.

Heavy operations are moved to background sessions, and long-running processes are broken into smaller tasks. Proper performance monitoring ensures continued scalability.

34. Explain Background Sessions with Example

Background sessions allow tasks to run asynchronously without blocking the user interface. They are commonly used for integrations, batch processing, and heavy calculations.

Session.StartSession(SessionId, Codeunit::"My Background Task"); // SessionId is an Integer variable that receives the new session's ID

Using background sessions improves user experience and system responsiveness in high-volume environments.
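A fuller sketch with hypothetical codeunits: StartSession launches work immediately in a new session, while TaskScheduler.CreateTask schedules a task that is stored in the database and picked up by the task scheduler. Passing 0 as the failure codeunit is assumed here to mean no failure handler.

```al
// Hypothetical objects; IDs and names are illustrative only.
codeunit 50113 "My Background Task"
{
    trigger OnRun()
    begin
        // Heavy work runs here in its own session, without a UI (hypothetical workload)
    end;
}

codeunit 50114 "Background Launcher"
{
    procedure RunInBackground()
    var
        SessionId: Integer;
    begin
        // Starts immediately in a new session for the current company
        if not Session.StartSession(SessionId, Codeunit::"My Background Task", CompanyName()) then
            Error('The background session could not be started.');
    end;

    procedure ScheduleTask()
    begin
        // Persisted scheduled task: codeunit, failure codeunit, ready flag, company, not-before time
        TaskScheduler.CreateTask(Codeunit::"My Background Task", 0, true, CompanyName(), CurrentDateTime());
    end;
}
```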

35. How Do You Avoid Long-Running Transactions?

Long-running transactions increase the risk of locking and deadlocks. To avoid them, developers minimize transaction scope, avoid unnecessary COMMITs, and offload work to background sessions.

Breaking processes into smaller, independent units improves system stability and concurrency.

36. How Do You Handle Data Upgrade in Extensions?

Data upgrades are handled using upgrade codeunits, which migrate data when an extension version changes. These codeunits ensure compatibility across versions without data loss.

Upgrade logic must be idempotent and carefully tested to avoid corruption during deployment.
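A sketch of an idempotent upgrade step using the System Application's Upgrade Tag module; the tag value and the migration procedure are hypothetical.

```al
// Hypothetical upgrade objects; IDs, names, and the tag value are illustrative only.
codeunit 50115 "Credit Review Upgrade"
{
    Subtype = Upgrade;

    trigger OnUpgradePerCompany()
    var
        UpgradeTag: Codeunit "Upgrade Tag";
        UpgradeTags: Codeunit "Credit Review Upg. Tags";
    begin
        // Idempotency guard: skip if this step already ran in this company
        if UpgradeTag.HasUpgradeTag(UpgradeTags.GetCreditReviewTag()) then
            exit;

        MigrateCreditReviewDates();

        UpgradeTag.SetUpgradeTag(UpgradeTags.GetCreditReviewTag());
    end;

    local procedure MigrateCreditReviewDates()
    begin
        // Hypothetical data move, e.g. copying values from an obsolete field into its replacement
    end;
}

codeunit 50116 "Credit Review Upg. Tags"
{
    procedure GetCreditReviewTag(): Code[250]
    begin
        // Any unique, never-reused string works as a tag
        exit('MYAPP-CreditReview-20240101');
    end;

    // Registers the tag so companies created later are treated as already upgraded
    [EventSubscriber(ObjectType::Codeunit, Codeunit::"Upgrade Tag", 'OnGetPerCompanyUpgradeTags', '', false, false)]
    local procedure RegisterPerCompanyTags(var PerCompanyUpgradeTags: List of [Code[250]])
    begin
        PerCompanyUpgradeTags.Add(GetCreditReviewTag());
    end;
}
```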

37. Explain Obsolete States in AL

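Obsolete states allow developers to deprecate fields, procedures, or objects without breaking existing code. Properties such as ObsoleteState, ObsoleteReason, and ObsoleteTag (on tables, fields, and other objects) and the [Obsolete] attribute (on procedures and events) guide developers during transitions.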

This mechanism supports clean evolution of solutions over time.
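A sketch of staged deprecation with hypothetical fields and procedures: the field is first marked Pending (kept, but flagged with compiler warnings for its consumers) and can be set to Removed in a later version once its data has been moved.

```al
// Hypothetical objects; IDs, names, and version tags are illustrative only.
tableextension 50103 "Customer Segment Ext" extends Customer
{
    fields
    {
        field(50110; "Legacy Segment"; Code[10])
        {
            DataClassification = CustomerContent;
            // Step 1: field and data stay, consumers get compile-time warnings
            ObsoleteState = Pending;
            ObsoleteReason = 'Replaced by the Customer Segment field.';
            ObsoleteTag = '2.0';
        }
        field(50111; "Customer Segment"; Code[20])
        {
            DataClassification = CustomerContent;
        }
    }
}

codeunit 50117 "Segment Mgt."
{
    [Obsolete('Use GetSegment instead.', '2.0')]
    procedure GetLegacySegment(Customer: Record Customer): Code[10]
    begin
        exit(Customer."Legacy Segment");
    end;

    procedure GetSegment(Customer: Record Customer): Code[20]
    begin
        exit(Customer."Customer Segment");
    end;
}
```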

38. How Do You Design High-Performance Reports?

High-performance reports use efficient keys, minimal datasets, and filtered data retrieval. Reports should avoid unnecessary calculations and leverage FlowFields carefully.

Advanced report design ensures acceptable performance even with large data volumes.

39. When Should You Use Power BI Instead of Reports?

Power BI is preferred for analytical reporting, dashboards, and cross-system analysis. Business Central reports are better suited for transactional and statutory reporting.

Choosing the right tool improves performance and usability.

40. How Do You Decide Between Customization and Configuration?

Configuration should be preferred over customization whenever it meets the requirement, because customization introduces maintenance overhead and upgrade risk.

Advanced professionals evaluate business requirements carefully before deciding to customize.

41. How Do You Handle Multi-Company and Intercompany Scenarios?

Multi-company scenarios require strict data separation, while intercompany features manage transactions between companies. Proper setup ensures accuracy and compliance.

Advanced understanding of intercompany postings is essential in group organizations.

42. How Do You Manage Technical Debt?

Technical debt is managed through refactoring, documentation, and regular architecture reviews. Ignoring technical debt leads to performance and maintenance issues.

Proactive management ensures long-term solution health.

43. Explain Telemetry and Monitoring in Business Central

Telemetry provides insights into system usage, errors, and performance. Business Central sends telemetry to Azure Application Insights, configured per environment and, for extension publishers, per extension.

Telemetry helps identify issues early and supports proactive maintenance.
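A sketch of custom telemetry from an extension, assuming the extension's app.json sets applicationInsightsConnectionString so that ExtensionPublisher signals reach the publisher's Application Insights resource. The event ID and dimensions are hypothetical.

```al
// Hypothetical telemetry helper; IDs, names, and the event ID are illustrative only.
codeunit 50118 "Integration Telemetry"
{
    procedure LogBatchCompleted(BatchId: Text; RecordCount: Integer; DurationMs: BigInteger)
    var
        CustomDimensions: Dictionary of [Text, Text];
    begin
        CustomDimensions.Add('BatchId', BatchId);
        // Format 9 gives locale-independent numbers
        CustomDimensions.Add('RecordCount', Format(RecordCount, 0, 9));
        CustomDimensions.Add('DurationMs', Format(DurationMs, 0, 9));

        // Only SystemMetadata-classified signals are emitted to Application Insights
        Session.LogMessage('MYAPP0001', 'Integration batch completed', Verbosity::Normal,
            DataClassification::SystemMetadata, TelemetryScope::ExtensionPublisher, CustomDimensions);
    end;
}
```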

44. How Do You Troubleshoot Production Issues?

Troubleshooting involves analyzing telemetry, logs, and reproducing issues in sandbox environments. Root-cause analysis is prioritized over quick fixes.

This disciplined approach minimizes risk in live systems.

45. How Do You Design for Long-Term Maintainability?

Maintainability is achieved through clean architecture, consistent coding standards, documentation, and testing.

Solutions designed with maintainability reduce future costs and risks.

46. Explain Differences Between SaaS and On-Prem Architecture

SaaS enforces stricter extension rules, limited customization, and continuous updates. On-premise allows more control but increases maintenance responsibility.

Understanding these differences guides architectural decisions.

47. How Do You Handle Breaking Changes from Microsoft?

Breaking changes are managed by monitoring release notes, testing extensions early, and adapting code proactively.

This reduces disruption during updates.

48. How Do You Manage Performance in Multi-Tenant Environments?

Multi-tenant environments require efficient code and minimal resource consumption. Poor design affects all tenants.

Performance optimization and monitoring are critical responsibilities.

49. How Do You Review AL Code in a Team?

Code reviews enforce standards, performance best practices, and knowledge sharing. They reduce defects and improve overall quality.

Structured review processes are essential in large teams.

50. What Makes a Good Business Central Architect?

A good Business Central architect combines deep technical knowledge with business understanding. They design solutions that are scalable, secure, and maintainable.

Architects make informed trade-offs and guide teams toward sustainable solutions.

Final Notes

Advanced-level interviews test architectural thinking, deep system understanding, and the ability to justify design decisions. Candidates who can explain why a solution is built a certain way stand out significantly in senior-level interviews.
